AI literacy for small teams: what should actually be documented by August 2026

AI literacy for small teams is no longer a future compliance issue. If your company already uses ChatGPT, Copilot or similar tools in everyday work, the topic is already operationally relevant under the EU AI Act.

This article is written for founder-led businesses, small B2B teams and other smaller organisations in Europe that want a clearer operational view of what Article 4 means in practice.

The discussion around AI literacy often gets distorted in two directions at once. One side treats it like a purely legal issue that can be postponed until enforcement feels closer. The other side treats it like a reason to build an oversized governance structure before the organisation even understands how AI is actually being used.

For most small teams, both reactions miss the point. The real issue is simpler and more operational. Once AI starts shaping written communication, client-facing material, summaries, internal decisions, workflows or repetitive tasks, the business needs a more explicit internal position. Not because every company suddenly needs a policy empire, but because unclear AI use is usually a trust, quality and responsibility problem long before it becomes a formal enforcement problem.

The practical question is not “Do we look compliant?” It is: can we explain which AI tools are being used, what the real risks are, what guidance applies internally, and who has actually been briefed on what?

Why this matters now and not later

The AI literacy obligation under Article 4 has applied since 2 February 2025. That matters because many smaller organisations already use AI in ordinary day-to-day work, often without naming it as such. Drafting text, rewriting copy, translating content, summarising meetings, preparing outlines, classifying information or supporting simple automations can all bring AI into real operational workflows.

Once that happens, the organisation is no longer dealing with a speculative future topic. It is dealing with the quality and governance of work that already exists. That is why postponing the issue until August 2026 is strategically weak. August 2026 is not the start of the obligation. It is the point where weak preparation starts looking harder to defend.

For small teams, AI literacy is less about formal governance language and more about making everyday AI use understandable, reviewable and properly documented.

What often goes wrong first in practice

AI use spreads informally, people rely on broad assumptions, and internal review standards stay unwritten. The result is usually not immediate disaster. It is thinner judgment, weaker traceability and avoidable confusion.

Why small teams should care even without legal drama

For smaller businesses, unclear AI use can weaken trust, create sloppy internal habits, and make external communication more fragile long before anyone talks about penalties.

What AI Literacy for Small Teams actually means

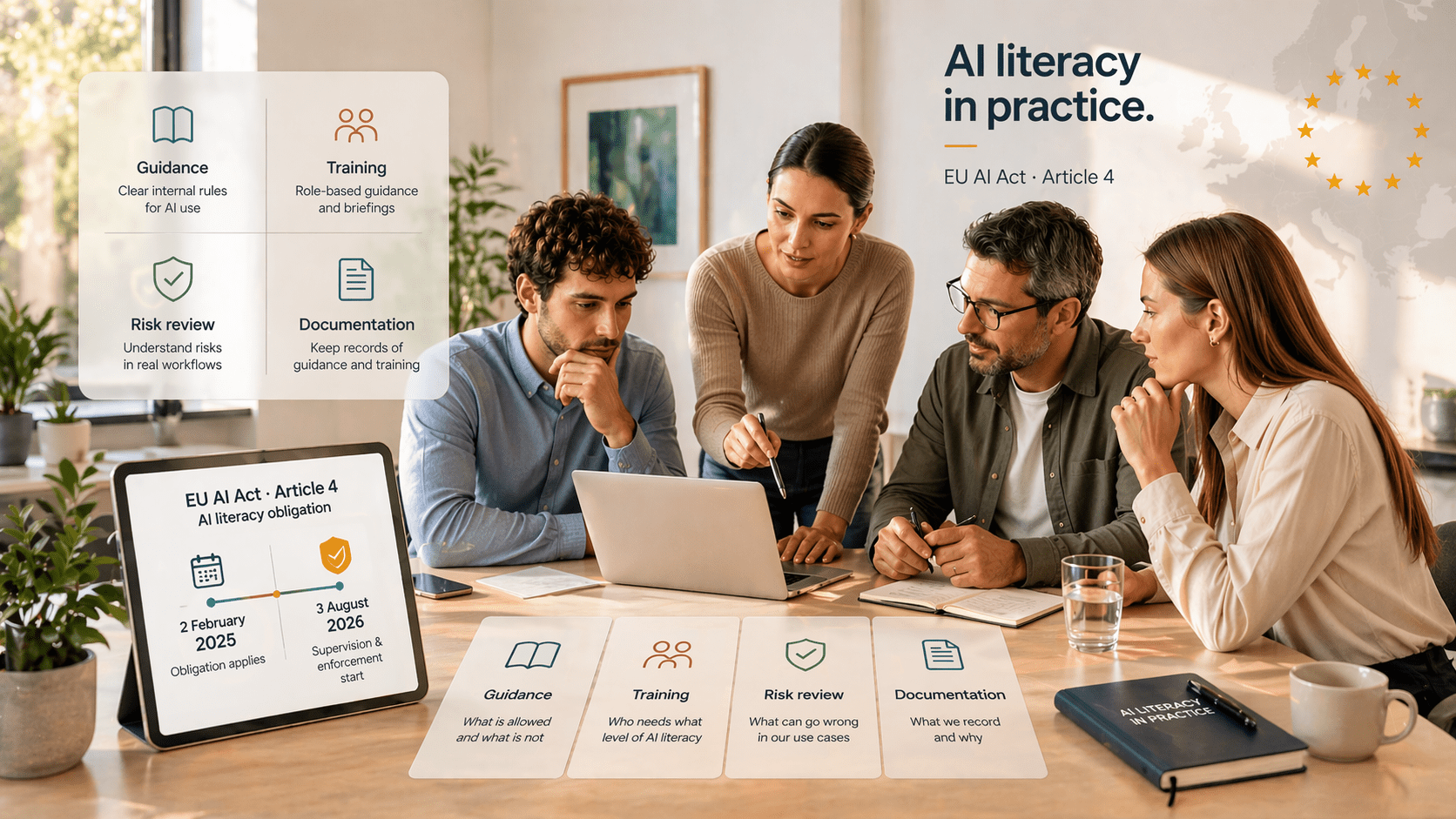

In plain terms, Article 4 requires providers and deployers of AI systems to take measures to ensure a sufficient level of AI literacy among staff and other persons dealing with AI systems on their behalf. The Commission’s own Q&A makes the practical logic quite clear: organisations should consider their role, the AI systems they use, the risks associated with those systems, the knowledge level of the people involved, and the context in which the systems are used.

That does not mean every employee needs the same training. It also does not mean there is one mandatory format that every organisation has to copy. The Commission explicitly rejects a one-size-fits-all model. That flexibility is useful, but it also removes a common excuse. Small teams cannot hide behind the idea that nothing can be done until someone gives them a standard corporate template.

In practice, a small team should be able to answer four questions without guessing:

- Which AI tools are actually being used in the organisation?

- For which real tasks and workflows are they used?

- Which risks are materially relevant in those use cases?

- What internal guidance or review rules apply to that usage?

If those answers still depend on vague assumptions or scattered personal habits, then the company’s AI use is likely running ahead of its internal discipline.

One point is worth stating plainly: reading vendor documentation alone is usually too weak. The Commission says directly that relying only on instructions for use or simply telling staff to read them may be ineffective and insufficient.

Who is usually in scope inside a smaller organisation

Most small businesses are not providers of their own AI systems. They are mainly deployers, meaning they use systems built by others. That still places them inside the scope of Article 4. In fact, the Commission explicitly addresses normal everyday usage, including employees using tools such as ChatGPT for writing advertising text or translating text.

It is also important not to think too narrowly about who counts. In smaller teams, AI-assisted work is often done not only by employees but also by freelancers, external specialists or contractors acting on the organisation’s behalf. For founder-led teams, that is normal operating reality, not an edge case.

What small teams should actually document by August 2026

The useful approach is not “document everything”. It is “document the minimum credible set of things that reflects reality”. That is a much better standard for a smaller organisation.

1. A real overview of the AI tools in use

Not a bloated register. A real one. Which tools are being used, by whom, for what kinds of tasks, and in which parts of the business? If the organisation cannot answer that without improvising, then the basis for everything else is weak.

2. A simple role classification

Is the organisation mainly using third-party AI systems? Is it embedding AI into its own delivery? Is it both? For most small teams this is not difficult to describe, but it should still be written down. Otherwise later decisions about risk, literacy and responsibility become vague very quickly.

3. A short risk logic for actual use cases

Not “AI is risky” in the abstract. Real workflow-level risks. Hallucinations. Weak summaries. Misleading translations. Overconfident drafting. Sensitive data exposure. Unchecked client-facing claims. The aim is not to sound dramatic. The aim is to sound accurate.

4. Internal guidance that people can actually follow

This is where many teams stay too vague. It should be clear what is allowed, what must be reviewed by a human, what should never be copied out unverified, what should not be put into certain tools, and where additional care is required. If the only real instruction is “use common sense”, then the internal standard is still underdeveloped.

5. A basic record of briefings, trainings or guidance initiatives

The Commission is clear here: there is no need for a certificate. Internal records of trainings and other guiding initiatives can be enough. That is good news for smaller organisations. It means the standard is practical. But it still means there should be some traceable record of who received which guidance and when.

6. Different depth for different roles

Not everyone needs the same level of detail. A founder, a marketer, an assistant and an external contractor may all use AI differently and carry different risks. Treating them identically may be administratively tidy, but it is not especially credible.

Taken together, the minimum credible set comes down to:

- A current list of AI tools actually in use

- Named business purposes and workflow context for each relevant use case

- A short risk logic linked to real tasks, not abstract slogans

- Plain internal guidance for review, verification and data handling

- A usable record of briefings, training or guidance

- Role-sensitive expectations rather than one generic message for everyone
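For teams that prefer to keep this record in a structured file rather than a text document, the checklist above could be sketched roughly as follows. This is a minimal illustration, not a prescribed format: the names `ToolUse`, `Briefing` and `unbriefed_users`, the fields, and the example entries and dates are all assumptions made for this sketch. Nothing in Article 4 or the Commission's Q&A requires this particular shape.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ToolUse:
    """One row of the AI tool overview (hypothetical structure)."""
    tool: str              # which tool, e.g. "ChatGPT"
    users: list[str]       # people or roles using it, including contractors
    purpose: str           # named business purpose and workflow context
    risks: list[str]       # workflow-level risks, not abstract slogans
    review_rule: str       # what a human must check before the output is used


@dataclass
class Briefing:
    """One entry in the briefing record (hypothetical structure)."""
    person: str
    topic: str
    held_on: date


def unbriefed_users(register: list[ToolUse], briefings: list[Briefing]) -> set[str]:
    """Return everyone listed as a tool user who has no briefing on record."""
    briefed = {b.person for b in briefings}
    users = {user for entry in register for user in entry.users}
    return users - briefed


# Illustrative entries only; replace with the team's real usage.
register = [
    ToolUse(
        tool="ChatGPT",
        users=["founder", "marketer", "freelance translator"],
        purpose="Drafting and translating advertising text",
        risks=["misleading translations", "unchecked client-facing claims"],
        review_rule="Human review before anything is sent to a client",
    ),
]
briefings = [
    Briefing("founder", "Internal AI use note", date(2025, 3, 1)),
    Briefing("marketer", "Internal AI use note", date(2025, 3, 1)),
]

print(sorted(unbriefed_users(register, briefings)))
```

With these example entries, the check surfaces the external translator as someone who uses the tool on the organisation's behalf but has no briefing on record. A spreadsheet with the same columns would serve the same purpose; the point is only that the overview and the briefing record can be compared against each other.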

What small teams should not overbuild just to look serious

This is where organisations often waste time. Article 4 does not require a theatrical governance machine. It does not require a ceremonial AI Officer. It does not require certificate collecting for its own sake. And it does not require a long internal policy that nobody uses during actual work.

That does not mean structure is irrelevant. It means the structure should be proportionate. The stronger move is usually a shorter, more truthful system: document real usage, define practical guidance, record actual briefings, and review the material when tools or workflows change.
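The last part of that, reviewing the material when tools or workflows change, is the easiest to forget. One possible way to make it checkable is to note the date of the last review alongside the dates of any changes. The sketch below assumes such dates are recorded somewhere; the function name `needs_review` and the example dates are hypothetical.

```python
from datetime import date


def needs_review(last_reviewed: date, change_dates: list[date]) -> bool:
    """Return True if any recorded change is newer than the last review."""
    return any(changed > last_reviewed for changed in change_dates)


# Illustrative values only: when the guidance was last reviewed, and when
# tools or workflows changed (for example, a new tool being introduced).
guidance_last_reviewed = date(2025, 3, 1)
recorded_changes = [date(2025, 2, 10), date(2025, 6, 15)]

print(needs_review(guidance_last_reviewed, recorded_changes))
```

Here the second change is later than the last review, so the guidance would be flagged for another look. A calendar reminder achieves much the same; the design choice is simply to tie reviews to actual changes rather than to an arbitrary schedule.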

The goal is not performative governance. The goal is a calmer and more explainable operating layer around AI use.

Key dates worth keeping straight without legal fog

- 1 August 2024: the AI Act (Regulation (EU) 2024/1689) entered into force.

- 2 February 2025: Article 4 on AI literacy started to apply.

- 2 August 2026: the general date of application for most of the remaining provisions of the AI Act.

What the practical minimum looks like for a smaller business

If your team already uses ChatGPT, Copilot or similar tools, the practical minimum is not especially glamorous. But it is sane. A real overview of current AI use. A short internal note on business role and context. A risk-based view of the main use cases. Plain-language guidance. A record of internal briefings. And someone who actually owns the upkeep of that material.

That will not impress people who like inflated governance language. It will, however, put a small team in a much more credible position than either denial or overengineering.

Why this also matters beyond compliance for trust and quality

The reason this topic matters commercially is simple. AI use shapes output. Output shapes trust. And trust is one of the hardest things to rebuild once weak habits become visible.

For smaller teams especially, AI literacy is not only about regulation. It is also about operational clarity. If the company cannot explain how AI is used, reviewed and bounded internally, then that lack of clarity tends to show up elsewhere too: in weaker communication, looser standards and more fragile judgment.

That is also why this topic fits naturally into broader questions around documentation, workflow clarity and privacy-aware digital operations rather than sitting in a purely legal corner. Done well, AI literacy is not a side project. It becomes part of a more mature operating posture.

A sensible next step if your team is already using AI

If AI is already present in everyday work but your internal guidance is still scattered, unwritten or overly dependent on personal judgment, that gap is worth fixing now.

In practice, AI literacy for small teams means having clear guidance, a realistic view of risk, and a usable internal record of how AI is actually used across the business.

A useful place to start is a short internal AI use note, a simple workflow-level risk review, and a lightweight briefing record. If your broader issue is that content, internal operations and trust signals are drifting apart, related pages such as AI-assisted content workflows or privacy-first stack setup are a better next read than generic AI hype pieces.

Short FAQ for small teams

Do we need a formal AI certificate?

No. The Commission explicitly says there is no need for a certificate. Internal records of trainings or other guidance initiatives can be sufficient.

Do we need to appoint an AI Officer?

No specific governance structure is mandated for Article 4 compliance. What matters more is that someone actually owns the guidance and documentation in practice.

Can we just rely on vendor documentation?

Usually not. The Commission says that simply relying on instructions for use or telling staff to read them may be ineffective and insufficient.

Does this only apply if we build our own AI product?

No. It also matters for normal day-to-day use of third-party tools such as ChatGPT when those tools shape real business work.

Conclusion without the fluff

The AI literacy obligation is already part of operating reality for teams that use AI in everyday work. For small businesses, the right answer is usually not more bureaucracy. It is more clarity, more usable guidance and better documentation tied to real workflows.

Teams that do this well will not necessarily be the ones with the most decorative policy packs. More often, they will be the ones that can calmly explain which AI tools they use, where the risks are, what internal guidance exists, and how that guidance is kept relevant over time.

Related reading and source links

- European Commission: AI Literacy – Questions & Answers

- EUR-Lex: Regulation (EU) 2024/1689 (AI Act)

- Nomadic Filmworks: AI-assisted content workflows

- Nomadic Filmworks: Privacy-first stack setup

- Nomadic Filmworks: Frequently asked questions

This article is a practical business-oriented interpretation for small teams and is not legal advice.